GTM Engineering Playbooks: Proven Workflow Examples for Outbound, Enrichment, and Attribution

Explore GTM engineering playbooks for outbound, enrichment, attribution, and cross-functional automation with proven B2B workflow examples.

TL;DR

- GTM engineering playbooks are repeatable, living systems that connect your tools, data, and team actions into workflows that actually run every day, not dusty Notion docs nobody opens.

- The five playbooks that matter most are signal-based outbound, waterfall data enrichment, unified cross-functional routing, multi-touch attribution, and agentic AI workflows.

- Choosing between Zapier, Make, and n8n matters less than whether your team has clear workflow logic before picking a platform.

- Start with one playbook, build an MVP in 30 days, and measure it against pipeline outcomes before layering on complexity.

- Factors.ai acts as the visibility and intelligence layer that powers these playbooks, connecting account signals, attribution data, and audience orchestration in one place.

I almost threw a coin into the Trevi Fountain the other day…

Then I paused and thought… if I had one B2B wish, what would I even ask for? More budget? Sounds noice. Better CPLs? hmm… tempting. A miracle pipeline spike before quarter end? ALWAYS.

But if we’re being honest, the real wish is way less pipeline-y… it’s this:

“Dear Fountain, I just want my tools to talk to each other.”

Because right now, the CRM is saying one thing, attribution is saying another, and there’s a spreadsheet called “final_final_v3” making decisions it has no business making… everyone’s working hard… but nothing’s working together.

The actual problem is… ZERO coordination.

And that exact mess is where GTM engineering playbooks come in. They’re what turn your stack from a group project that nobody owns into a system that actually runs on shared logic. Marketing, sales, and CS are finally speaking the same language instead of politely shunning each other in meetings.

This blog breaks down five GTM engineering playbooks that fix that. The kind you build when you’re done throwing coins into fountains.

What are GTM engineering playbooks, really?

Let's get the definition out of the way quickly, because most content on this topic overcomplicates it. GTM engineering playbooks are repeatable systems that connect your tools, data, and human actions across revenue teams into workflows that execute daily. They aren't strategy decks. They aren't SOPs sitting in a wiki that three people have bookmarked and nobody reads. They're living, operational systems with triggers, logic, and outputs.

Think of them the way a pilot thinks about a pre-flight checklist. Every step exists for a reason and in a specific order; skipping one creates downstream risk. The difference is that a playbook doesn't just tell you what to do… it wires the actions together so the sequence actually happens without someone remembering to click a button.

Modern revenue teams need playbooks because the environment has gotten genuinely complicated. Buyer signals are fragmented across LinkedIn, your website, G2, email engagement, and a dozen other surfaces. You've got multiple tools generating data that lives in separate silos. Human handoffs between marketing and sales are slower than anyone admits. Attribution is a mess of conflicting dashboards. And CAC keeps climbing because inefficiency compounds.

The best playbooks address all of this by reducing decision fatigue. When fewer things require debate, your team performs better. An SDR doesn't need to decide which accounts to prioritize if the playbook scores and queues them automatically. A marketer doesn't need to wonder whether a campaign influenced pipeline if the attribution workflow tracks it end-to-end. Playbooks take the thinking that your best operators do intuitively and encode it into a system that runs whether they're on holiday or not.

Here's a simple way to distinguish a playbook from a process doc: a process doc tells you what should happen, while a playbook makes it happen. One is informational. The other is operational. If your "playbook" requires someone to read it and then manually execute every step, it's a process doc wearing a fancy label.

Why do most revenue teams need GTM engineering playbooks (and not one more tool)?

I've lost count of the number of B2B teams I've seen running 10 to 20 GTM tools without any system connecting them. There's always a moment of clarity, usually around quarterly review time, when someone realizes the team has spent six figures on software and still can't answer a basic question like "which accounts are warming up right now?" More software without orchestration doesn't create efficiency. It creates expensive chaos with better-looking dashboards.

I keep coming back to this distinction: tools create capability, but playbooks create outcomes. A buying intent tool with no trigger logic is wasted spend, because it generates signals nobody acts on fast enough. A CRM with bad routing rules leaks pipeline as leads sit unassigned for days while the account shops competitors. An attribution tool with disconnected data produces misleading dashboards that make your best channels look mediocre and your worst channels look productive.

Consider what this looks like in practice. A team might have LinkedIn Ads running, a CRM full of contacts, website analytics tracking every pageview, and email automation nurturing thousands of leads. Yet they still can't answer four essential questions. Which account is warming up? Who should act on it? What channel actually influenced the pipeline? When should outbound get triggered? Those questions don't get answered by buying another tool. They get answered by connecting the tools you already have into a system with shared logic.

The uncomfortable truth is that most tool purchases are a form of procrastination. It's easier to buy a new platform than to sit down and map the workflow that would make your existing stack perform. Playbooks force that mapping exercise. They require you to define inputs, logic, and outputs, and in doing so, they expose the gaps that no amount of software can fill on its own.

Revenue teams that invest in playbook design before tool selection consistently outperform those that don't. The reason is straightforward: when you know what workflow you need, you can evaluate tools against that specific requirement. When you don't, you end up collecting capabilities you never properly deploy.

Playbook #1: How to build a signal-based outbound engine

This is the playbook most teams should build first, because it produces visible pipeline impact the fastest. A signal-based outbound engine replaces the old model of static list pulls and cold blasts with a dynamic system that triggers outbound when buying intent actually rises. These are the kind of GTM engineering workflow examples that turn passive data into active sales motions.

Here are some trigger inputs (AKA signals you should be paying attention to)

The engine starts with defining what signals indicate genuine buying interest. Not every website visit matters, but certain behaviors reliably correlate with purchase intent. Here are the most common trigger inputs:

- Pricing page visits from target accounts, especially repeat visits within a short window.

- Competitor comparison page visits, which signal active evaluation.

- Demo page repeat visits, indicating someone is building a case internally.

- Paid ad engagement from target accounts, particularly when matched to high-fit firmographic profiles.

- New funding or hiring signals, which suggest budget availability and growth investment.

- Intent surge topics from third-party data providers, showing research spikes on relevant categories.

None of these signals alone is a slam dunk… the power comes from combining them, so an account that visits pricing twice, clicks a LinkedIn ad, and shows a hiring signal for your buyer persona gets a very different score than one that bounced off a blog post once.
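To make the "combine the signals" idea concrete, here is a minimal sketch of a weighted intent score. The signal names, the weights, and the `cap_per_kind` limit are all assumptions for illustration; you would tune them against your own closed-won data rather than copy them.

```python
from dataclasses import dataclass

# Hypothetical weights, chosen only to illustrate the idea.
SIGNAL_WEIGHTS = {
    "pricing_page_visit": 30,
    "competitor_comparison_visit": 25,
    "demo_page_visit": 20,
    "paid_ad_engagement": 15,
    "funding_or_hiring": 10,
    "intent_surge": 10,
    "blog_visit": 2,
}


@dataclass
class Signal:
    account: str
    kind: str


def intent_score(signals: list[Signal], cap_per_kind: int = 2) -> int:
    """Sum signal weights, counting each signal kind at most cap_per_kind times."""
    counts: dict[str, int] = {}
    score = 0
    for signal in signals:
        seen = counts.get(signal.kind, 0)
        if seen >= cap_per_kind:
            continue  # repeat visits matter, but only up to a point
        counts[signal.kind] = seen + 1
        score += SIGNAL_WEIGHTS.get(signal.kind, 0)  # unknown kinds score 0
    return score


# Two pricing visits, an ad click, and a hiring signal versus one blog bounce.
warm = [
    Signal("acme", "pricing_page_visit"),
    Signal("acme", "pricing_page_visit"),
    Signal("acme", "paid_ad_engagement"),
    Signal("acme", "funding_or_hiring"),
]
cold = [Signal("globex", "blog_visit")]
print(intent_score(warm), intent_score(cold))  # prints: 85 2
```

The cap is a design choice: it rewards repeat visits without letting one noisy visitor inflate the score indefinitely.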

Workflow logic

Once the signals are defined, the workflow follows a clear sequence:

- Identify the account. Match the signal to a named account using IP resolution, cookie data, or ad platform matching.

- Score fit and intent. Layer firmographic fit (industry, size, tech stack) on top of behavioral intent to produce a composite score.

- Enrich the buying committee. Pull contacts associated with the account, focusing on decision-makers and influencers in the relevant department.

- Push to the SDR queue. Route the enriched, scored account into the appropriate rep's working list based on territory or segment rules.

- Auto-create a personalized sequence. Generate an outreach sequence using the signal context, so the first email references what the account actually did, not a generic value prop.

- Slack alert the AE owner. Notify the account executive in real time so they're aware of warming activity, even if the SDR handles initial outreach.
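The scoring and routing steps above can be sketched in a few lines. This is an illustration under stated assumptions, not a reference implementation: the target industries, the employee range, the 40/60 fit-to-intent weighting, the threshold, and the `TERRITORY_OWNERS` map are all hypothetical placeholders.

```python
from dataclasses import dataclass

# Hypothetical ICP and territory rules; replace with your own definitions.
TARGET_INDUSTRIES = {"saas", "fintech"}
TERRITORY_OWNERS = {"NA": "sdr_na", "EMEA": "sdr_emea"}


@dataclass
class Account:
    name: str
    industry: str
    employees: int
    region: str
    intent: int  # behavioral intent score on a 0-100 scale


def fit_score(account: Account) -> int:
    """Firmographic fit on a 0-100 scale."""
    score = 0
    if account.industry in TARGET_INDUSTRIES:
        score += 60
    if 50 <= account.employees <= 1000:
        score += 40
    return score


def composite_score(account: Account, fit_weight: float = 0.4) -> float:
    """Blend fit and intent into one number for queue ordering."""
    intent = min(account.intent, 100)
    return round(fit_weight * fit_score(account) + (1 - fit_weight) * intent, 1)


def route(accounts: list[Account], threshold: float = 60.0) -> dict[str, list[str]]:
    """Push accounts above the threshold into each rep's queue, hottest first."""
    queues: dict[str, list[str]] = {}
    for account in sorted(accounts, key=composite_score, reverse=True):
        if composite_score(account) < threshold:
            continue
        owner = TERRITORY_OWNERS.get(account.region, "sdr_unassigned")
        queues.setdefault(owner, []).append(account.name)
    return queues
```

Accounts with no matching territory land in an `sdr_unassigned` queue here, so a gap in the territory map shows up as a visible backlog instead of a silent drop.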

Why does this work tho?

Outbound works best when it behaves like customer success. It responds to signals rather than interrupting strangers. When an SDR reaches out to an account that's already researching your category, the conversation starts from a fundamentally different place. You're not creating awareness. You're entering a conversation that's already happening.

The old model of exporting a list, writing a generic sequence, and blasting 500 accounts a week produced diminishing returns years ago. The signal-based approach flips the ratio: fewer accounts, better timing, higher conversion. Most teams that implement this see reply rates double within the first quarter, largely because the timing improves.

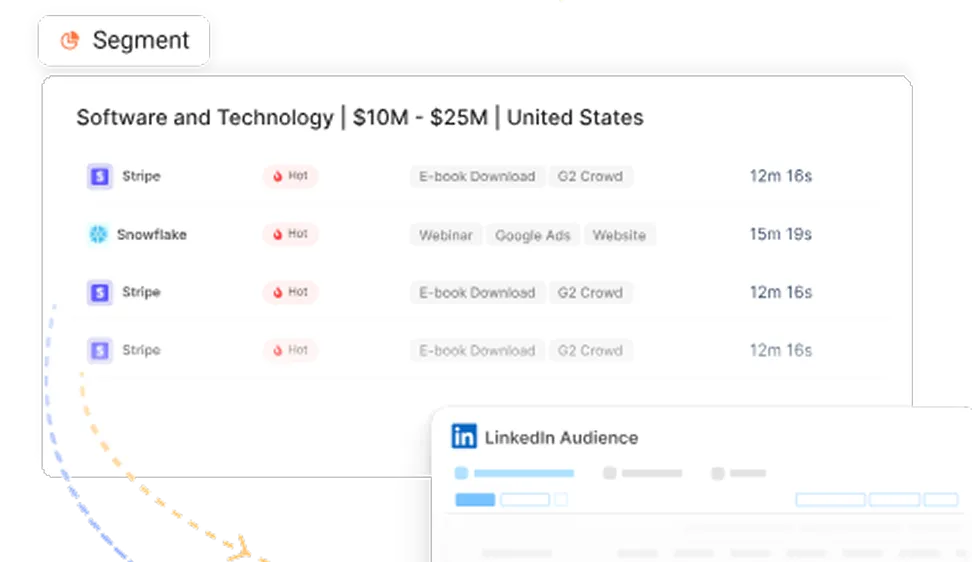

Factors.ai fits naturally here as the account-level intelligence layer. It connects web engagement, ad interaction, and firmographic data to surface the accounts showing genuine interest. Instead of guessing which accounts to prioritize, your SDRs work from a queue that updates based on real-time buying behavior. That's the difference between outbound as a numbers game and outbound as a precision instrument.

Playbook #2: How does a waterfall data enrichment workflow actually work?

Bad data is the silent killer of GTM execution. You can have the best playbooks, the sharpest scoring models, and the most talented reps, but if 30% of your contact records have missing emails, wrong titles, or stale company data, everything downstream underperforms. Most teams blame reps for low conversion when the real issue is missing or stale contact data that nobody bothered to fix upstream.

A waterfall data enrichment workflow solves this by enriching records in a sequential cascade rather than relying on a single provider. The logic is simple: try the first source, and if it doesn't return a match, try the second, then the third, and so on. Each layer catches what the previous one missed.

The enrichment cascade

Here's the standard sequence most teams use:

- Internal CRM data. Start with what you already have. Surprisingly often, the data exists somewhere in your system but isn't mapped to the right fields.

- Primary vendor (ZoomInfo, Apollo, Clearbit, or equivalent). Your main enrichment provider handles the bulk of lookups.

- Secondary vendor. A second provider catches the records your primary misses. Different providers have different coverage strengths by region, industry, and company size.

- LinkedIn or manual scrape/API source. For high-value accounts where automated sources fall short, a lightweight scraping layer or manual research step fills remaining gaps.

- AI normalization layer. Standardize titles, clean company names, deduplicate records, and flag inconsistencies across all enriched data.
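The cascade itself is simple enough to sketch. In the example below, each provider is just a function that takes a lookup key and returns whatever fields it found. The provider functions, the field names, and the sample values are hypothetical stand-ins; real vendor APIs will differ, so treat this as the shape of the logic only.

```python
from typing import Callable, Optional

Record = dict[str, Optional[str]]
Provider = Callable[[str], Record]

# Assumed required fields for a usable contact record.
REQUIRED_FIELDS = ("email", "title", "company")


def waterfall_enrich(
    key: str, providers: list[tuple[str, Provider]]
) -> tuple[Record, dict[str, str]]:
    """Try providers in order, filling only the fields still missing."""
    record: Record = {field: None for field in REQUIRED_FIELDS}
    sources: dict[str, str] = {}
    for name, lookup in providers:
        missing = [f for f in REQUIRED_FIELDS if not record[f]]
        if not missing:
            break  # fully enriched, skip the remaining (paid) lookups
        result = lookup(key)
        for field in missing:
            value = result.get(field)
            if value:
                record[field] = value
                sources[field] = name  # remember which layer supplied it
    return record, sources


# Stub providers standing in for the CRM and two vendors.
def crm_lookup(key: str) -> Record:
    return {"company": "Acme"}


def primary_vendor(key: str) -> Record:
    return {"email": "jo@acme.example", "company": "Acme Inc"}


def secondary_vendor(key: str) -> Record:
    return {"title": "VP of Sales", "email": "other@acme.example"}


record, sources = waterfall_enrich(
    "acme.example",
    [("crm", crm_lookup), ("primary", primary_vendor), ("secondary", secondary_vendor)],
)
```

Two choices worth noting: earlier sources win when two providers disagree, and the `sources` map records where each field came from, which helps when you later audit coverage by provider.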

Why the waterfall model beats single-source enrichment

The difference in match rates alone justifies the extra setup. Going from a 65% match rate to 90%, for example, means your reps are working with substantially more complete data, which translates directly into more meetings booked and fewer dead-end outreach attempts.

The normalization layer at the end is where most teams cut corners, and it's where the real value compounds. Without it, you'll have three variations of the same job title, company names that don't match across records, and duplicate contacts that inflate your list sizes without adding reach. A simple AI normalization step, even a basic script that standardizes common fields, pays for itself within weeks.
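As a rough idea of what "a basic script that standardizes common fields" might look like, here is a rule-based sketch (no AI involved). The alias table, the suffix list, and the record shape are assumptions; a real version would grow these tables from the variations you actually see in your CRM.

```python
import re

# Hypothetical alias table and suffix list; extend from your own data.
TITLE_ALIASES = {
    "vp": "Vice President",
    "svp": "Senior Vice President",
    "dir": "Director",
    "mgr": "Manager",
}
SMALL_WORDS = {"of", "and", "the"}
COMPANY_SUFFIX = re.compile(r"[,\s]+(inc|llc|ltd|corp|gmbh)\.?$", re.IGNORECASE)


def normalize_title(title: str) -> str:
    """Expand common abbreviations and apply consistent capitalization."""
    words = title.replace(".", "").replace(",", " ").split()
    cleaned = []
    for word in words:
        lower = word.lower()
        if lower in TITLE_ALIASES:
            cleaned.append(TITLE_ALIASES[lower])
        elif lower in SMALL_WORDS:
            cleaned.append(lower)
        else:
            cleaned.append(lower.capitalize())
    return " ".join(cleaned)


def normalize_company(name: str) -> str:
    """Strip a trailing legal suffix such as 'Inc.' or 'LLC'."""
    return COMPANY_SUFFIX.sub("", name.strip())


def dedupe(records: list[dict]) -> list[dict]:
    """Keep the first record per email address, with normalized fields."""
    seen: set[str] = set()
    unique = []
    for rec in records:
        key = rec["email"].strip().lower()
        if key in seen:
            continue
        seen.add(key)
        unique.append({
            **rec,
            "title": normalize_title(rec["title"]),
            "company": normalize_company(rec["company"]),
        })
    return unique
```

With this sketch, "VP of Sales", "V.P. of sales", and "vp of Sales" all collapse to the same title, which is exactly the three-variations problem described above.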

Building this as a proper workflow rather than a one-time project matters because your data decays constantly. People change jobs, companies rebrand, and email addresses go stale. A waterfall enrichment workflow that runs on a regular cadence keeps your database healthy without requiring a quarterly "data cleanup sprint" that everyone dreads and nobody finishes.

Playbook #3: How do you unify marketing, sales, and CS routing?

This is the playbook where most companies hemorrhage money without realizing it. Marketing sees product interest from an account. Sales sees risk in an open opportunity. Customer success sees declining adoption. And none of them share signals with each other. The result is that expansion opportunities get missed, at-risk accounts don't get intervention, and the same customer gets three different messages from three different teams in the same week.

The goal of this playbook is to unify marketing, sales, and CS workflows into a shared routing system that operates from a single source of account truth. Revenue teams should manage accounts the way airlines manage flights: one shared control tower where everyone sees the same status, and actions are coordinated rather than duplicated.

The shared account state model

The foundation is a shared account state that updates across teams in real time. Instead of each team maintaining its own list of "accounts to watch," you create a unified scoring and state system:

- Account enters a defined state. States might include expansion opportunity, at-risk, upsell-ready, watchlist, or dormant. These states are triggered by a combination of signals from all three teams.

- Shared score updates across teams. When marketing engagement rises, sales sees it. When product usage drops, CS and sales both see it. No information stays siloed.

- Owner assignment rules trigger automatically. Based on the account state, the right owner from the right team gets assigned. An expansion signal routes to sales. A risk signal routes to CS. A re-engagement signal routes to marketing.

- Tasks get generated by department. Each team receives specific, actionable tasks based on their role. Sales gets a call task. CS gets a health check task. Marketing gets a nurture enrollment task.

- Timeline gets logged in CRM. Every state change, assignment, and action is recorded so the full account history is visible to anyone who looks.
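Here is one way the state model above could be expressed in code. The state names and the team and task mapping follow the examples in the list; the `AccountRecord` class, the `transition` function, and the in-memory timeline are hypothetical stand-ins for whatever your CRM and automation platform provide.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional

# State -> (owning team, task type), following the routing rules above.
STATE_ROUTING = {
    "expansion_opportunity": ("sales", "call"),
    "at_risk": ("cs", "health_check"),
    "re_engagement": ("marketing", "nurture_enrollment"),
}


@dataclass
class AccountRecord:
    name: str
    state: str = "watchlist"
    timeline: list[dict] = field(default_factory=list)


def transition(
    account: AccountRecord,
    new_state: str,
    reason: str,
    now: Optional[datetime] = None,
) -> Optional[dict]:
    """Move an account to a new state, log it, and return the task to create."""
    now = now or datetime.now(timezone.utc)
    account.timeline.append({
        "at": now.isoformat(),
        "from": account.state,
        "to": new_state,
        "reason": reason,
    })
    account.state = new_state
    routing = STATE_ROUTING.get(new_state)
    if routing is None:
        return None  # states like watchlist or dormant create no task
    team, task_type = routing
    return {"account": account.name, "team": team, "task": task_type}
```

Keeping the routing table as plain data, separate from the transition logic, is deliberate: it is the part marketing, sales, and CS have to agree on, so it should be readable without reading code.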

Real-world routing examples

These scenarios happen constantly in B2B SaaS, and most teams handle them manually or not at all:

- Existing customer visits the enterprise pricing page. This triggers a sales expansion alert because a customer browsing a higher tier is a buying signal that CS alone shouldn't handle.

- Open opportunity shows product inactivity. If the prospect stops logging into the trial or free tier during an active sales cycle, that's a risk signal. CS or a solutions engineer should intervene before the deal stalls.

- Customer champion changes jobs. When a key contact moves to a new company, marketing should trigger a nurture sequence for the new organization while CS flags the existing account for relationship risk.

Each of these scenarios involves multiple teams, and each one falls through the cracks when there's no shared routing logic. The unified playbook catches them systematically. I've seen teams recover six-figure expansion revenue within months of implementing shared routing, simply because the signals that were always there finally reached the right person at the right time.

The toughest part of this playbook is the organizational agreement. Getting marketing, sales, and CS to agree on shared definitions of account states, ownership rules, and escalation triggers requires real cross-functional alignment. But once you've built that alignment into an automated system, the coordination happens without recurring meetings about "who owns this account."

Playbook #4: How do you build a multi-touch attribution workflow?

Attribution debates sometimes resemble group projects where everyone claims credit for the final result. The LinkedIn team points to ad clicks. The content team highlights organic traffic. The SDR team claims the cold call booked the meeting. And the truth is that all of them probably played a role, but most teams have no system for measuring how much.

First-touch and last-touch attribution models are simple to implement, which is why they're popular. But they're also fundamentally incomplete. First-touch gives all the credit to whoever caught the buyer's initial attention, ignoring everything that happened between awareness and purchase. Last-touch rewards whatever happened right before conversion, which is usually a direct visit or a sales meeting, and tells you nothing about what built the intent.

What does a modern attribution workflow connect?

A proper multi-touch attribution workflow stitches together the full journey:

- Paid LinkedIn clicks that drove the first interaction with your brand.

- Organic search visits where the buyer researched your category or read your content.

- Webinar attendance that deepened engagement and moved the account into consideration.

- Direct traffic return visits, where the buyer came back to your site by typing your URL, a strong signal of brand recall.

- Sales meetings that converted interest into pipeline.

- Opportunity creation, the moment the deal officially enters your CRM.

Each of these touchpoints contributes to the eventual outcome. The question is how to model that contribution fairly.

Attribution models compared

No attribution model answers every question perfectly, and anyone who tells you otherwise is probably selling one. The most effective approach for B2B teams is to run multiple models in parallel and compare results. When three different models all agree that a channel is underperforming, you can act on that insight with confidence. When they disagree, you've found a channel worth investigating more closely.
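Running models in parallel is less work than it sounds. The sketch below applies three common models (first-touch, last-touch, and linear) to the same journeys and returns credited pipeline per channel for each. The channel names and the journey format are assumptions for illustration, and real setups usually add position-based or time-decay models as well.

```python
from collections import defaultdict

Journey = tuple[list[str], float]  # (ordered touchpoints, pipeline value)


def first_touch(touches: list[str]) -> dict[str, float]:
    return {touches[0]: 1.0}


def last_touch(touches: list[str]) -> dict[str, float]:
    return {touches[-1]: 1.0}


def linear(touches: list[str]) -> dict[str, float]:
    """Split credit evenly across every touchpoint in the journey."""
    credit: dict[str, float] = defaultdict(float)
    for channel in touches:
        credit[channel] += 1 / len(touches)
    return dict(credit)


MODELS = {"first_touch": first_touch, "last_touch": last_touch, "linear": linear}


def compare_models(journeys: list[Journey]) -> dict[str, dict[str, float]]:
    """Return credited pipeline per channel under each attribution model."""
    results = {name: defaultdict(float) for name in MODELS}
    for touches, value in journeys:
        if not touches:
            continue  # nothing to credit
        for name, model in MODELS.items():
            for channel, share in model(touches).items():
                results[name][channel] += share * value
    return {name: dict(credit) for name, credit in results.items()}


journeys = [
    (["linkedin_ad", "organic_search", "webinar", "sales_meeting"], 40_000.0),
]
report = compare_models(journeys)
```

In this single-journey example, first-touch gives LinkedIn the full 40,000, last-touch gives it all to the sales meeting, and linear gives each touchpoint 10,000. A spread like that across models is the signal to investigate a channel more closely.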

Also, I have a thought here… if your dashboard only rewards clicks, your budget will slowly abandon brand, content, and education. Those channels don't generate last-click conversions, but they build the intent that makes every other channel work. A buyer who converts on a retargeting ad after attending two webinars and reading five blog posts didn't convert because of the ad. The ad just happened to be there when they were ready.

Factors.ai fits naturally into this playbook as the unification layer. It connects paid, organic, and direct engagement data at the account level, then models influence across the full journey. Instead of running attribution in a spreadsheet cobbled together from four different platforms, you get a single view of how touchpoints connect to pipeline. That's the kind of visibility that makes budget conversations productive rather than political.

Playbook #5: What do agentic GTM workflows look like for lean teams?

There’s a lot of hype around AI agents right now, and most of it describes a future that doesn’t exist yet. However, AI agents and prompt engineering are already enabling automation and personalization in GTM engineering, allowing teams to streamline processes and optimize customer interactions.

But there’s a type of agentic GTM workflow that lean teams can implement today without building a custom AI platform or hiring a machine learning team. These workflows connect the tools and APIs you already use, which is where the efficiency and scalability come from.

Building such systems takes a different skill set from traditional GTM roles: technical skills such as data management, SaaS integration, and coding, combined with the business and strategic judgment needed to bridge multiple functions.

The key is thinking about agents as task-specific assistants that remove waiting time, not as autonomous systems that replace your team. Real-time iteration and optimization in GTM engineering enables constant refinement of messaging and positioning based on market feedback, applying a systematic approach similar to software engineering. GTM engineers build the underlying systems, playbooks, and automation architectures essential for revenue operations, focusing on initial setup and technical configuration.

Four practical agents you should consider building

- Agent 1: Daily pipeline watcher

This agent scans your active pipeline every morning and surfaces accounts showing changed behavior. It might flag an account where web engagement spiked, an opportunity where the champion went quiet, or a deal that's been sitting at the same stage for too long. The output is a short summary delivered to the rep's Slack, suggesting next best actions based on what changed overnight. It doesn't make decisions. It reduces the time between "something happened" and "someone noticed."

- Agent 2: Research assistant

Before every meeting, this agent builds a brief on the account. It pulls recent news, funding announcements, tech stack information, relevant LinkedIn activity, and any engagement data from your own platform. Reps spend an absurd amount of time doing this manually, toggling between tabs and copying notes into a doc. An automated brief that lands 15 minutes before the call gives that time back and makes every conversation more informed.

- Agent 3: Hygiene agent

Data hygiene is the task nobody wants to own, which is exactly why it's perfect for automation. This agent runs on a schedule and flags duplicates, stale contact owners, missing required fields, and records that haven't been updated in a defined window. It doesn't fix everything automatically because some decisions require human judgment. But it surfaces the problems so your ops team can address them in batches rather than discovering them mid-campaign.

- Agent 4: Campaign optimizer

This agent monitors campaign performance against pipeline outcomes and suggests budget reallocation. If LinkedIn campaigns targeting enterprise accounts are generating pipeline at a lower cost than mid-market campaigns, the agent flags the opportunity. It can even draft the adjustment for an ops manager to approve. The critical word there is "approve," because automated budget changes without a human checkpoint are a recipe for expensive mistakes.
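Of the four, the hygiene agent is the easiest to prototype, so here is a minimal sketch of its flagging pass. The record shape, the required-field list, and the 90-day staleness window are assumptions. Note that it only reports problems and changes nothing, in line with the point that some fixes need human judgment.

```python
from datetime import date, timedelta

# Assumed required fields; adjust to match your CRM schema.
REQUIRED_FIELDS = ("email", "owner", "title")


def hygiene_report(
    records: list[dict], today: date, stale_after_days: int = 90
) -> dict[str, list[int]]:
    """Flag duplicate, incomplete, and stale records by id without editing them."""
    issues: dict[str, list[int]] = {"duplicate": [], "missing_fields": [], "stale": []}
    seen_emails: set[str] = set()
    cutoff = today - timedelta(days=stale_after_days)
    for rec in records:
        email = (rec.get("email") or "").strip().lower()
        if email:
            if email in seen_emails:
                issues["duplicate"].append(rec["id"])
            else:
                seen_emails.add(email)
        if any(not rec.get(f) for f in REQUIRED_FIELDS):
            issues["missing_fields"].append(rec["id"])
        if rec["updated"] < cutoff:
            issues["stale"].append(rec["id"])
    return issues
```

Run on a schedule, the returned dictionary becomes the batch list your ops team works through, instead of a surprise discovered mid-campaign.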

Guardrails that matter

The difference between useful AI agents and dangerous ones comes down to guardrails. Every agent should have:

- Approval layers for any action that involves spending money, changing data, or contacting a customer.

- Confidence thresholds that suppress recommendations when the data quality is too low or the sample size is too small.

- Shadow mode testing, where the agent runs alongside the human process for a few weeks so you can compare its recommendations against actual decisions before trusting it.

- Human override that's always accessible and never buried behind a settings menu.
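These guardrails translate fairly directly into a gate that every agent recommendation passes through before anything happens. The sketch below is one possible shape: the action names, the 0.8 confidence floor, and the minimum sample size of 30 are hypothetical values, not recommendations.

```python
from dataclasses import dataclass

# Assumed set of actions that spend money, change data, or contact a customer.
SENSITIVE_ACTIONS = {"change_budget", "update_record", "contact_customer"}


@dataclass
class Recommendation:
    action: str
    confidence: float  # 0.0 to 1.0, as reported by the agent
    sample_size: int   # number of data points behind the recommendation


def gate(
    rec: Recommendation,
    min_confidence: float = 0.8,
    min_sample: int = 30,
    shadow_mode: bool = False,
) -> str:
    """Decide what may happen to a recommendation before any action is taken."""
    if rec.confidence < min_confidence or rec.sample_size < min_sample:
        return "suppress"  # weak evidence: do not even surface it
    if shadow_mode:
        return "log_only"  # record it for comparison with human decisions
    if rec.action in SENSITIVE_ACTIONS:
        return "needs_approval"  # a human must sign off first
    return "auto_execute"
```

The order of the checks matters: low-quality recommendations are suppressed first, shadow mode overrides everything that remains, and only then does the approval rule apply.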

Great GTM AI doesn't replace humans. It removes waiting time. The pipeline watcher doesn't decide the strategy. It makes sure your reps see what matters before lunch instead of discovering it next Monday. The research assistant doesn't replace relationship knowledge. It eliminates the 20 minutes of tab-switching that precedes every call. That's the practical version of agentic workflows, and it's available right now.

Zapier vs Make vs n8n: which fits your GTM engineering stack?

Choosing an automation platform is one of those decisions that feels consequential in the moment but matters less than people think. The honest answer about GTM engineering with Zapier vs Make vs n8n is that all three can execute most GTM workflows competently. What matters more is whether your team has clearly defined the workflow before selecting the tool.

That said, there are real differences worth understanding.

My strategic recommendation for you

- If you're a startup running fewer than 10 workflows, Zapier is the fastest path to value. You'll be up and running within an afternoon, and the per-task cost won't sting until you scale.

- For teams building more complex GTM operations with conditional routing and multi-step branching, Make offers a better balance of power and usability at a friendlier price point.

- For serious GTM engineering teams that want full control, n8n is usually the right answer. The self-hosted option means your data stays in your infrastructure, you don't pay per execution, and you can build custom nodes for any integration that doesn't exist natively. The trade-off is that it requires someone on your team who's comfortable with slightly more technical tooling.

I want you to remember that tool choice matters a little less than workflow clarity. I've seen teams achieve excellent results with Zapier and terrible results with n8n, purely because the Zapier team knew exactly what they wanted to automate, and the n8n team was experimenting without a clear workflow design. Define the playbook first, then pick the platform that best executes it.

How Factors.ai fits into these playbooks

Throughout these playbooks, there's a recurring need: a layer that sees across your GTM tools, identifies what's happening at the account level, and makes that intelligence actionable. Factors.ai fills that role. It's not another tool to add to the pile. It's the visibility and intelligence layer that powers the playbooks themselves.

Here's how it maps to the workflows covered above:

- Account identification. Factors identifies engaged accounts from paid, organic, and direct web traffic, giving your signal-based outbound engine its trigger data.

- Audience sync. Warm accounts and segments can be synced directly to ad platforms, so your paid campaigns target the accounts already showing intent rather than broad, cold audiences.

- Sales alerts. When an account crosses an engagement threshold, Factors triggers alerts to the right rep through your existing workflow tools.

- Multi-touch attribution. Factors connects touchpoints across the full buyer journey and models pipeline influence, replacing the spreadsheet attribution that most teams reluctantly maintain.

- Account prioritization. By combining intent signals, firmographic fit, and engagement data, Factors produces a prioritized view that feeds directly into your routing and outbound playbooks.

The positioning here is deliberate. Factors doesn't try to be every tool in your stack. It's the connective tissue that makes your existing tools smarter by giving them shared intelligence about what's actually happening across your accounts. When your enrichment workflow, outbound engine, routing logic, and attribution system all draw from the same account intelligence, the entire GTM operation starts functioning as a single system rather than a collection of independent parts.

How do you build your first GTM playbook in 30 days?

The biggest risk with playbook building isn't choosing the wrong workflow. It's trying to build five simultaneously and finishing none. Teams fail because they launch multiple automations and own none of them properly. The 30-day framework below keeps you focused on one playbook, taken from idea to measured outcome.

Week 1: Audit your funnel leaks

Spend the first week mapping where your current GTM process breaks. Pull your CRM data, look at conversion rates between stages, and identify the biggest drop-offs. Talk to your reps about where deals stall. Ask marketing where leads go dark. The goal isn't to catalogue every problem but to find the one leak that costs the most revenue. That leak tells you which playbook to build first.

Week 2: Choose one workflow only

Pick one playbook. Just one. If your biggest problem is that inbound leads sit unrouted for days, build the routing playbook. If it's that outbound is spraying cold emails at random accounts, build the signal-based outbound engine. If your enrichment data is so bad that reps spend more time researching than selling, build the waterfall enrichment workflow. The hardest part of this week is saying no to everything else. Resist the urge to scope-creep.

Week 3: Build the MVP automation

Wire up the minimum viable version of your chosen playbook. It doesn't need to be perfect. It doesn't need to handle every edge case. It needs to run the core workflow end to end, from trigger to action, without manual intervention. Use whichever automation platform your team already knows. If you spend this week evaluating tools instead of building, you've already fallen behind.

Week 4: Measure what matters

Run the playbook for a full week, then measure it against outcomes that connect to pipeline:

- Response rate on outbound generated by the playbook.

- Speed to follow-up, meaning how quickly a signal turns into a rep action.

- Opportunity conversion for leads or accounts that went through the playbook versus those that didn't.

- Time saved per rep or per workflow cycle, quantified in hours.

These four metrics tell you whether the playbook is working or needs adjustment. If the numbers look good after week four, document what you've built, assign a permanent owner, and start scoping playbook number two. If they don't, iterate on the workflow rather than abandoning it. The first version is almost never the final one.
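Two of these metrics, speed to follow-up and opportunity conversion, are easy to compute from exported timestamps and flags. The sketch below assumes you can pull (signal time, first rep action time) pairs and a per-account opportunity flag out of your CRM; the data shapes and field names are hypothetical.

```python
from datetime import datetime
from statistics import median


def speed_to_follow_up_hours(events: list[tuple[datetime, datetime]]) -> float:
    """Median hours between a signal firing and the first rep action."""
    return median(
        (action_at - signal_at).total_seconds() / 3600
        for signal_at, action_at in events
    )


def conversion_rate(accounts: list[dict]) -> float:
    """Share of accounts that became opportunities."""
    if not accounts:
        return 0.0
    return sum(1 for a in accounts if a["opportunity"]) / len(accounts)


def conversion_lift(playbook: list[dict], control: list[dict]) -> float:
    """Difference in conversion rate: playbook accounts minus the rest."""
    return conversion_rate(playbook) - conversion_rate(control)
```

The median is used instead of the mean so that one signal left untouched over a long weekend does not distort the picture. With only a week of data the comparison will be noisy, so read the lift as a direction, not a verdict.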

Common mistakes teams make with GTM playbooks

Knowing what to watch for saves you from the most expensive lessons.

- Automating broken processes

If your lead routing logic is already flawed, automating it just makes it fail faster. Before you wire up a workflow, make sure the underlying process actually works when executed manually. Automation amplifies what's already there, good and bad alike.

- Buying tools before defining ICP

Your ideal customer profile shapes every playbook, from which signals you track to how you score accounts to which enrichment providers you prioritize. Teams that purchase tools before clearly defining their ICP end up configuring those tools for a target they haven't agreed on, which means rework within months.

- No owner for workflows

A playbook without an owner decays within weeks. Someone needs to monitor it, fix it when integrations break, and update it when the business logic changes. If ownership isn't explicitly assigned, it defaults to "everyone's job," which functionally means nobody's job.

- No alert fatigue controls

Playbooks that send too many notifications create a Slack channel that everyone mutes. Threshold tuning is essential. Your outbound alerts should fire for genuinely warm accounts, not every anonymous website visitor. If reps stop trusting the alerts, the playbook is dead regardless of how well it's built.

- Bad CRM hygiene

Playbooks run on data, and dirty data produces garbage outputs. Duplicate records, inconsistent field values, and outdated contact information corrupt scoring, routing, and enrichment workflows. Invest in hygiene as a prerequisite, not an afterthought.

- Measuring volume instead of pipeline impact

Tracking how many leads got enriched or how many alerts fired tells you the playbook is running. It doesn't tell you the playbook is working. Measure outcomes that connect to revenue: meetings booked, opportunities created, pipeline influenced, and deal velocity.

- No documentation

When the person who built the playbook leaves or goes on holiday, can someone else understand and maintain it? If the answer is no, you don't have a playbook. You have a single point of failure with an automation layer on top. Document the logic, the tool connections, and the decision rules so the system survives personnel changes.
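Of the mistakes above, alert fatigue is the one most easily prevented in code. A minimal sketch, assuming a score threshold of 70 and a three-day cooldown per account (both hypothetical values you would tune), looks like this:

```python
from datetime import datetime, timedelta


class AlertThrottle:
    """Fire alerts only for warm accounts, and at most once per cooldown window."""

    def __init__(self, min_score: int = 70, cooldown: timedelta = timedelta(days=3)):
        self.min_score = min_score
        self.cooldown = cooldown
        self._last_sent: dict[str, datetime] = {}

    def should_alert(self, account: str, score: int, now: datetime) -> bool:
        if score < self.min_score:
            return False  # not warm enough to interrupt a rep
        last = self._last_sent.get(account)
        if last is not None and now - last < self.cooldown:
            return False  # this account was already flagged recently
        self._last_sent[account] = now
        return True
```

The state here lives in memory for simplicity; in a real workflow you would persist the last-sent timestamps wherever your automation platform stores data.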

In a nutshell…

GTM engineering playbooks transform your revenue operation from a collection of disconnected tools into a coordinated system where signals flow between teams and actions happen at the right time, which makes revenue growth more predictable. The five playbooks covered here (signal-based outbound, waterfall enrichment, cross-functional routing, multi-touch attribution, and agentic workflows) address the most common points where B2B growth teams lose pipeline.

The practical takeaway is simple: start with the one playbook that addresses your biggest funnel leak. Build an MVP in 30 days using your existing tools and whichever automation platform your team already knows. Measure it against pipeline outcomes, not activity metrics. Assign a clear owner. Document the logic. Then, once it’s stable and producing results, scope the next one.

Tool selection (Zapier, Make, or n8n) matters less than workflow clarity, and Factors.ai can serve as the shared intelligence layer that feeds account signals, attribution data, and audience orchestration into whichever playbooks you build. The teams that win aren’t the ones with the most tools. They’re the ones who connected their tools into systems that run without someone remembering to check a spreadsheet.

Modern GTM engineers build systems that scale growth through automation and integration rather than added headcount. As companies shift to integrated, cross-functional growth teams, GTM engineers own the entire buyer journey, from defining the ideal customer profile to building pipeline and converting it into revenue.

Frequently asked questions about GTM engineering playbooks

Q1. What are GTM engineering playbooks?

GTM engineering playbooks are repeatable systems that automate and optimize revenue workflows across marketing, sales, and customer success. They connect your tools, data sources, and team actions into end-to-end processes that run daily, replacing manual handoffs and ad hoc coordination with structured, automated logic.

Q2. What is the first GTM playbook to build?

Most teams should start with either signal-based outbound or lead routing, because these playbooks produce visible pipeline impact the fastest. Signal-based outbound shows results within weeks as reply rates improve. Routing playbooks reduce lead response time immediately, which directly affects conversion rates. Choose whichever one addresses your biggest current funnel leak.

Q3. Which tool is best for GTM engineering: Zapier, Make, or n8n?

It depends on your team's scale and technical maturity. Zapier is fastest for small teams with simple workflows. Make offers better value and more powerful branching logic for scaling operations. n8n tends to win for advanced teams that want full control, self-hosting, and no per-task cost. The most important factor isn't the platform but whether your workflow logic is clearly defined before you start building.

Q4. What is a waterfall data enrichment workflow?

A waterfall data enrichment workflow is a sequential enrichment process that runs a record through multiple data providers in order, where each layer catches what the previous one missed. Instead of relying on a single vendor's match rate, the waterfall approach combines CRM data, a primary enrichment provider, a secondary provider, and an AI normalization step to achieve match rates of 85 to 95%.
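The fall-through logic is easier to see in code. This is a simplified sketch, not a vendor integration: `primary_provider` and `secondary_provider` are stand-in functions returning canned data, the required fields are made up for illustration, and the AI normalization step is left out to keep the example short.

```python
# Hypothetical fields a record needs before it counts as fully enriched.
REQUIRED_FIELDS = ("email", "title", "company_size")


def missing_fields(record):
    """List the required fields that are still empty on this record."""
    return [field for field in REQUIRED_FIELDS if not record.get(field)]


def waterfall_enrich(record, providers):
    """Run a record through providers in order, filling only the gaps.

    Earlier sources win: a later provider never overwrites a value that the
    CRM or an earlier provider already supplied, and the loop stops as soon
    as nothing is missing.
    """
    enriched = dict(record)
    for provider in providers:
        gaps = missing_fields(enriched)
        if not gaps:
            break
        result = provider(enriched)
        for field in gaps:
            if result.get(field):
                enriched[field] = result[field]
    return enriched


# Stand-in providers with canned responses, for illustration only.
def primary_provider(record):
    return {"title": "VP Marketing"}


def secondary_provider(record):
    return {"title": "Head of Growth", "company_size": "201-500"}


crm_record = {"email": "jane@acme.com"}
print(waterfall_enrich(crm_record, [primary_provider, secondary_provider]))
```

Stopping as soon as the record is complete is the part that saves money, since most enrichment vendors charge per lookup.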

Q5. How do GTM playbooks help B2B SaaS teams?

They reduce manual work, improve speed to action, and connect activity to pipeline. Specifically, playbooks ensure that buying signals trigger the right team action automatically, enrichment keeps data fresh and complete, routing sends accounts to the right owner in real time, and attribution connects marketing spend to revenue outcomes. The net effect is lower CAC, faster sales cycles, and better cross-functional coordination.

Q6. Can Factors.ai support GTM engineering playbooks?

Yes, particularly for account intelligence, multi-touch attribution, audience sync, and revenue orchestration. Factors.ai acts as the visibility layer that identifies engaged accounts across paid, organic, and direct channels, then feeds that intelligence into your outbound, routing, and attribution playbooks. It connects the signals that most teams currently track in separate tools into a unified account-level view.
